Deploying the Pebble Flow Workflow Server

Deploying the Pebble Flow Workflow Server on cloud infrastructure.

Deploying Pebble Flow on Microsoft's Azure Cloud

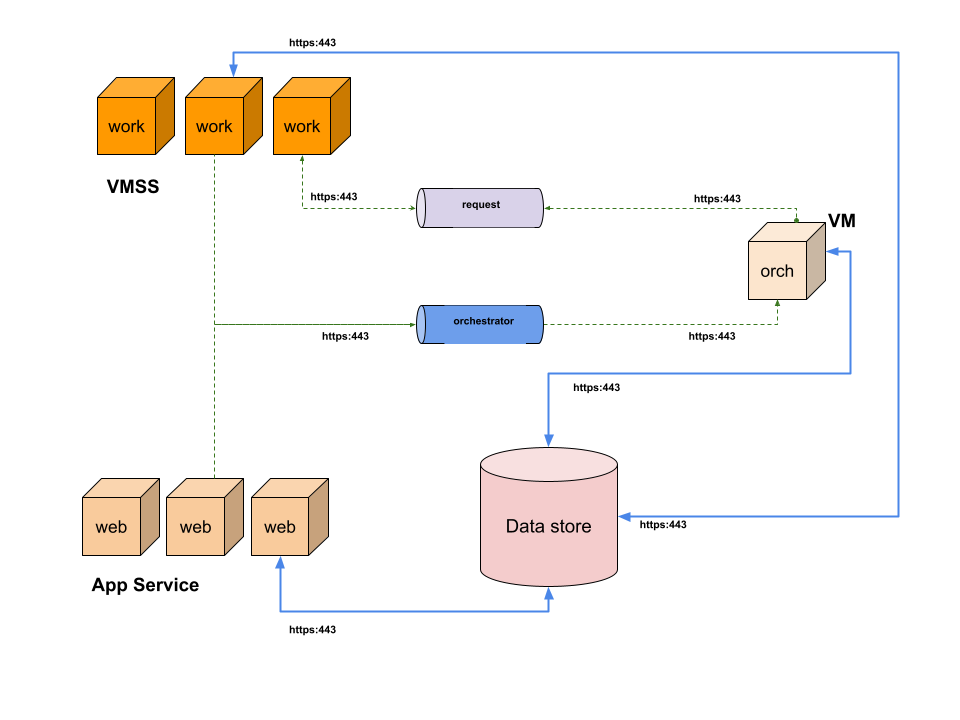

Pebble Flow Architecture

The Pebble Flow workflow server is a collection of deployed resources. Architecturally, it comprises four main resources: a single, vertically scalable orchestrator VM; a horizontally and vertically scalable set of worker VMs; a horizontally and vertically scalable set of web application servers; and a blob key-value store shared by all of them.

The orchestrator VM

The orchestrator is responsible for managing workflow requests. It keeps track of the state of each workflow, sends orchestration messages to worker nodes, and manages the worker nodes' responses. Only one orchestrator VM should be deployed at any time.
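To make the orchestrator's bookkeeping concrete, here is a minimal sketch of per-workflow state tracking. It is not Pebble Flow's implementation: the `WorkflowTracker` class, its step states, and the message shape are hypothetical, and the sketch assumes a workflow's steps run strictly in order.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class StepState(Enum):
    PENDING = "pending"
    DISPATCHED = "dispatched"
    DONE = "done"
    FAILED = "failed"


@dataclass
class WorkflowTracker:
    """Hypothetical state the single orchestrator keeps for one workflow."""

    workflow_id: str
    steps: list[str]
    state: dict[str, StepState] = field(init=False)
    outbox: list[dict] = field(default_factory=list)  # messages bound for workers

    def __post_init__(self) -> None:
        self.state = {step: StepState.PENDING for step in self.steps}

    def dispatch_next(self) -> dict | None:
        """Send the next pending step to the workers once the previous one is done."""
        for step in self.steps:
            current = self.state[step]
            if current is StepState.DONE:
                continue
            if current is StepState.PENDING:
                self.state[step] = StepState.DISPATCHED
                message = {"workflow": self.workflow_id, "step": step}
                self.outbox.append(message)
                return message
            return None  # a step is in flight or has failed; nothing to send
        return None  # every step is done

    def handle_response(self, step: str, ok: bool) -> None:
        """Record a worker's response for a step that was dispatched."""
        if self.state.get(step) is not StepState.DISPATCHED:
            raise ValueError(f"unexpected response for step {step!r}")
        self.state[step] = StepState.DONE if ok else StepState.FAILED

    @property
    def finished(self) -> bool:
        return all(s is StepState.DONE for s in self.state.values())
```

In use, the orchestrator would call `dispatch_next()` to emit a message for the first step, wait for the matching `handle_response()` call, and repeat until `finished` is true.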

The worker VMs

The worker VMs are responsible for processing pebble and workflow jobs and requests. There can be as many worker VMs as you see fit, and they can be scaled up and down manually or automatically using Azure autoscale combined with custom scaling rules.

Azure VMs come in many shapes and sizes, so orchestrators and workers can be allocated up to 1 TB of memory. Most workloads will never need that much, but it's good to have the option available should the need arise.
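When picking a VM size, one simple approach is to take the smallest size whose memory covers the expected peak plus some headroom. The sketch below assumes you supply your own catalog of candidate sizes. The size names and memory figures in `example_catalog` are placeholders, not real Azure SKUs, and the 20% default headroom is an arbitrary illustration.

```python
from __future__ import annotations


def smallest_fitting_size(
    peak_gb: float, catalog: dict[str, float], headroom: float = 0.2
) -> str | None:
    """Return the catalog entry with the least memory that covers peak_gb plus headroom.

    catalog maps a VM size name to its memory in GB; headroom is a fraction
    (0.2 means 20% above the expected peak). Returns None if nothing fits.
    """
    needed = peak_gb * (1 + headroom)
    fits = [(memory, name) for name, memory in catalog.items() if memory >= needed]
    return min(fits)[1] if fits else None


# Placeholder catalog: substitute the sizes actually available in your region.
example_catalog = {"size-s": 16, "size-m": 64, "size-l": 256, "size-xl": 1024}
```

For example, a 48 GB peak with 20% headroom needs about 57.6 GB, so `smallest_fitting_size(48, example_catalog)` picks `"size-m"`.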

The web application servers

The web application servers are responsible for serving API and web application requests. This includes:

- managing users

- logging in Flow users

- providing admin management screens

- accepting processing requests

- downloading results

- responding to status requests

- creating data sources

- compiling spreadsheets

- building workflows

- managing teams

- managing releases

- managing domains

- connecting with Zen users

- managing request security keys

This list is not exhaustive. The web application servers can be scaled horizontally and vertically, and are currently limited to a total RAM capacity of 256 GB.
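Because the 256 GB limit applies to the total RAM across all web application servers, the per-instance size determines how far you can scale out. A minimal sketch, assuming the total is simply the instance count times the per-instance RAM:

```python
WEB_RAM_CAP_GB = 256  # total RAM limit for the web application servers


def max_web_instances(ram_per_instance_gb: float, cap_gb: float = WEB_RAM_CAP_GB) -> int:
    """Largest number of identical instances whose combined RAM stays within the cap."""
    if ram_per_instance_gb <= 0:
        raise ValueError("ram_per_instance_gb must be positive")
    return int(cap_gb // ram_per_instance_gb)


print(max_web_instances(16))  # 16, since 16 x 16 GB = 256 GB
print(max_web_instances(14))  # 18, since 18 x 14 GB = 252 GB
```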

The blob key-value store

All architectural components communicate with each other via message passing using the blob store queues. The blob store also serves as a shared persistent data store.
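The queue-based message passing can be illustrated with an in-process sketch. Python's `queue.Queue` stands in for the blob store queues here, and the queue names, message fields, and toy job (summing a list of numbers) are hypothetical. A real deployment would read and write Azure storage queues over HTTPS instead.

```python
import json
import queue

# In-memory stand-ins for two blob store queues (hypothetical names).
job_queue: queue.Queue = queue.Queue()
result_queue: queue.Queue = queue.Queue()


def send(q: queue.Queue, message: dict) -> None:
    """Serialize the message to text before enqueuing it."""
    q.put(json.dumps(message))


def receive(q: queue.Queue) -> dict:
    """Dequeue one message and decode it."""
    return json.loads(q.get())


def worker_step() -> None:
    """A worker takes one job, processes it, and posts the result."""
    job = receive(job_queue)
    send(result_queue, {"job_id": job["job_id"], "status": "done",
                        "output": sum(job["values"])})


# The orchestrator enqueues a job, a worker handles it, and the orchestrator reads the result.
send(job_queue, {"job_id": "job-1", "values": [1, 2, 3]})
worker_step()
print(receive(result_queue))  # {'job_id': 'job-1', 'status': 'done', 'output': 6}
```

Because components only ever exchange serialized messages through the queues, any number of workers can consume from the same job queue without knowing about each other.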

Transport of all data, including writes to the blob store and message passing, is secured via HTTPS.
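On the client side, a simple guard can refuse to talk to any storage endpoint that is not HTTPS. This is a sketch: the `require_https` function and the example account URL are hypothetical. On the Azure side, a storage account's secure-transfer setting (the `supportsHttpsTrafficOnly` resource property) enforces the same rule for incoming requests.

```python
from urllib.parse import urlparse


def require_https(url: str) -> str:
    """Return url unchanged if it uses HTTPS; otherwise refuse it."""
    if urlparse(url).scheme.lower() != "https":
        raise ValueError(f"refusing non-HTTPS endpoint: {url}")
    return url


require_https("https://examplestorage.queue.core.windows.net")  # accepted
```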

Flow's architecture comprises a scalable set of workers and web services, blob storage, and a single orchestrator

Create the resource

The Pebble Flow Workflow Server can be deployed as an Azure resource and is available on the Azure Marketplace. To start, click "Create a resource".

Azure services' "Create a resource" button

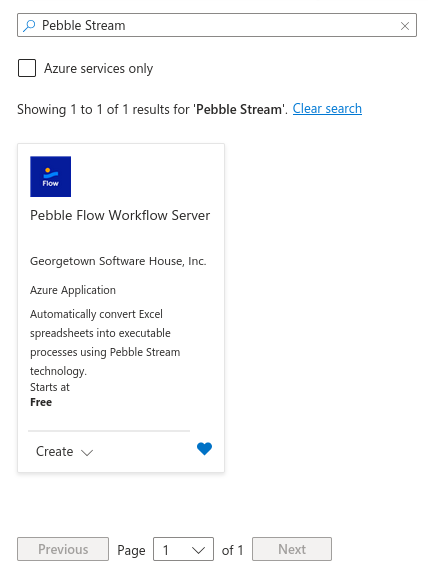

Search for the Pebble Flow Workflow Server

Search for "Pebble Stream" to find the Pebble Flow server on the Azure Marketplace, then click on the Pebble Flow Workflow Server card.

Begin Pebble Flow deployment

Point-and-click deployment via the Azure Marketplace UI is straightforward and takes three steps. Choose the appropriate plan, then click the blue "Create" button to start configuring the Pebble Flow deployment.

Click on the "Create" button to begin the Azure deployment.

Choose the plan that best fits your organization's needs.

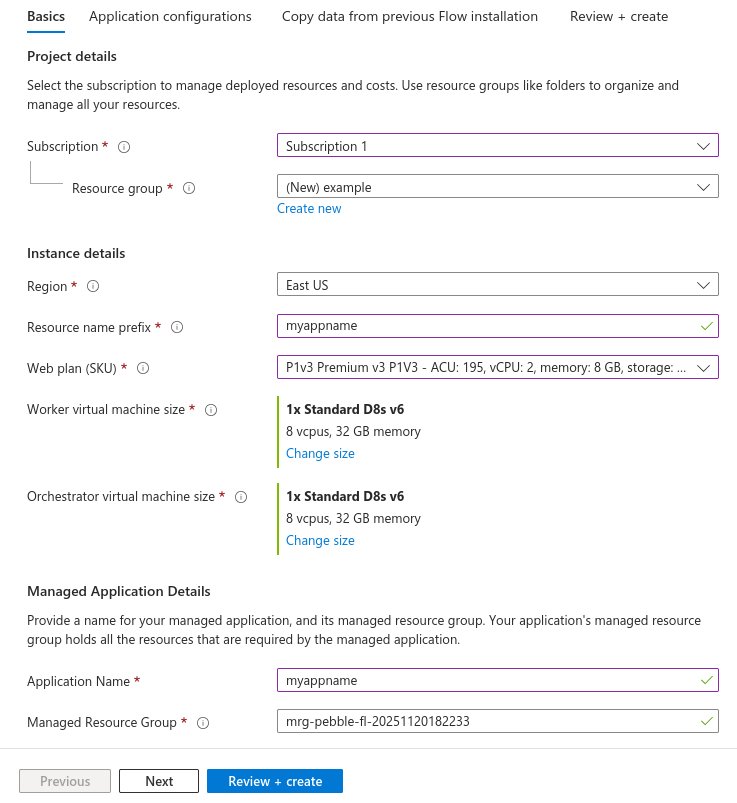

Step 1 - Select your deployment subscription and choose resource names

In the first step, select your subscription and a unique resource group for the deployment. Keeping Pebble Flow in its own resource group isolates it from your other Azure resources. Choose your App Service plan and select a storage account type that meets your business continuity needs.

Choose different plans for the orchestrator, workers, and web application. Note the difference in sizing.

Be sure to choose plans that support autoscaling if you want to use that feature for your workers.

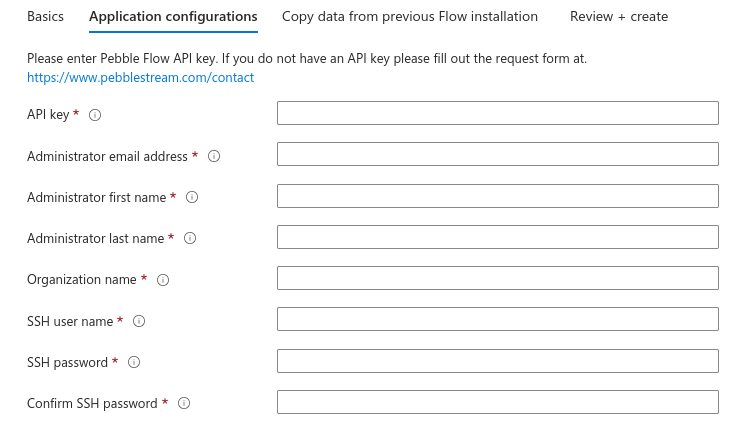

Step 2 - Enter the API key and specify the admin user

Enter your API key (contact us to get this), along with the email address and name of the Pebble Flow administrator. The deployment will automatically create an account for this user. Make sure to enter the correct email address at this step.

Enter a valid API key, specify the administrator's email and name, and choose a secure VM SSH username and password.

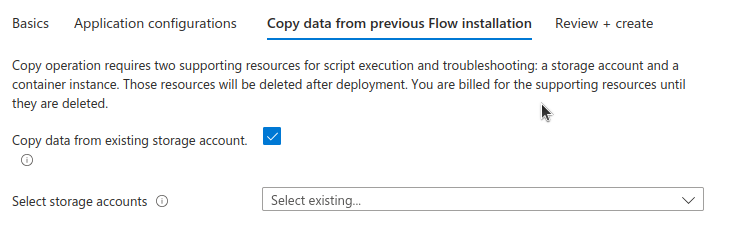
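Before filling in the form, you may want to sanity-check the administrator email and the SSH credentials. The sketch below assumes Azure's usual Linux VM password rules (12 to 72 characters, with at least three of lowercase, uppercase, digit, and special character) and a small, non-exhaustive set of reserved usernames. Check the current Azure rules, since the portal's own validation is authoritative. The email pattern is only a rough shape check.

```python
from __future__ import annotations

import re

# Rough shape check only: something@something.tld
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
# Assumed, non-exhaustive set of usernames Azure rejects for Linux VMs.
RESERVED_USERNAMES = {"root", "admin", "administrator", "user", "test"}


def check_admin_inputs(email: str, ssh_user: str, ssh_password: str) -> list[str]:
    """Return a list of problems; an empty list means the inputs look plausible."""
    problems = []
    if not EMAIL_RE.match(email):
        problems.append("administrator email looks malformed")
    if ssh_user.lower() in RESERVED_USERNAMES:
        problems.append(f"SSH username {ssh_user!r} is reserved")
    if not 12 <= len(ssh_password) <= 72:
        problems.append("SSH password must be 12 to 72 characters long")
    character_classes = sum([
        any(c.islower() for c in ssh_password),
        any(c.isupper() for c in ssh_password),
        any(c.isdigit() for c in ssh_password),
        any(not c.isalnum() for c in ssh_password),
    ])
    if character_classes < 3:
        problems.append("SSH password needs 3 of: lowercase, uppercase, digit, special character")
    return problems
```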

Step 3 - Optionally copy metadata from a previous installation

If there was a previous Pebble Flow installation, you may copy its pebble resources to this new installation. If you don't want to do this, uncheck "Copy data from existing storage account" and proceed to the next step.

Copy users, compiled pebbles, spreadsheets, workflows, authorizations, and API endpoints to the new installation.

Request and job results from the previous install are not copied.

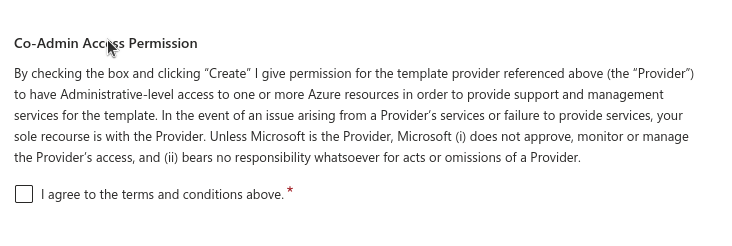

Review configuration, accept terms, then deploy

On the final screen, you must agree to the terms and conditions for using Pebble Flow on the Azure Marketplace.

Agree to the terms and conditions for running resources procured from the Azure Marketplace.

The Pebble Flow Workflow Server deployment should take 30 minutes or less.

Autoscaling Flow Web Servers and VMs on Microsoft's Azure Cloud

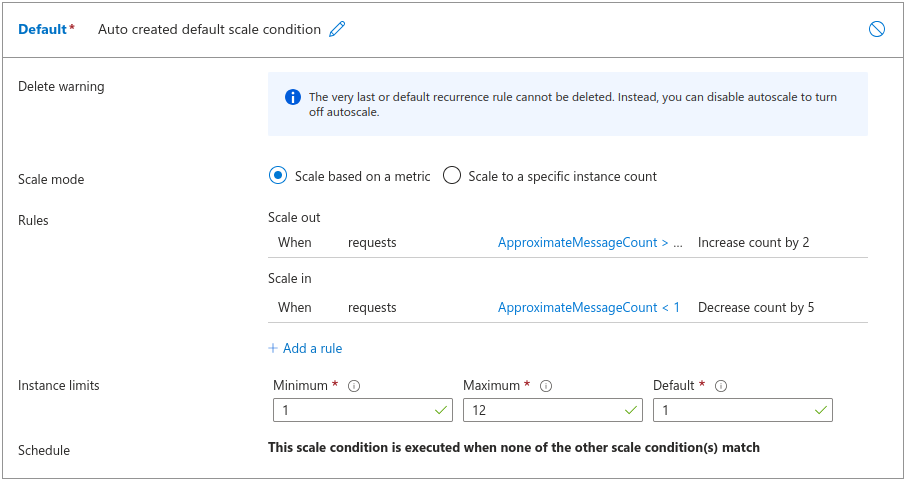

Pebble Stream Flow is designed to run as a dynamically distributed service on Azure, and Azure App Service's built-in autoscaling can help manage its costs. Pebble Stream Flow's API uses the Azure blob store's queues to manage request traffic, and Azure App Service can monitor that queue traffic as a cue for scaling.

Configuring Azure App Service autoscaling via the portal is simple.

You can use Azure's portal to configure scaling rules based on pending Pebble Flow request message counts.

One way to configure scaling in the Azure portal is to scale out (add) Pebble Stream Flow workers when the average number of pending requests exceeds a threshold. Scaling in could be triggered once the request queue has been completely drained. The maximum number of servers can also be capped, regardless of the number of pending requests.
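The rule described above can be expressed as a small decision function. This sketches the logic only, not how Azure evaluates autoscale rules internally. The function name, the default bounds, and the choice to treat "drained" as every sample in the window being zero are assumptions.

```python
from __future__ import annotations

from statistics import mean


def desired_workers(
    current: int,
    pending_samples: list[int],
    *,
    scale_out_threshold: float,
    step: int = 1,
    min_workers: int = 1,
    max_workers: int = 10,
) -> int:
    """Return the worker count a queue-driven scaling rule would ask for.

    pending_samples holds recent pending-request counts for one time window.
    """
    if pending_samples and mean(pending_samples) > scale_out_threshold:
        target = current + step  # scale out: average backlog above threshold
    elif pending_samples and max(pending_samples) == 0:
        target = current - step  # scale in: queue drained for the whole window
    else:
        target = current  # no change
    # Cap at the configured bounds regardless of the backlog.
    return max(min_workers, min(target, max_workers))
```

Requiring the whole window to be empty before scaling in is a deliberate choice: it keeps one quiet sample from removing a worker that is about to receive more work.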

For Web Apps, the principal resource drain is data downloads, and scaling can be configured based on the number of pending HTTP requests.
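For the web application servers, a pending-HTTP-request rule can also be written down as an autoscale profile. The sketch below builds one as a Python dictionary keyed on the App Service plan's `HttpQueueLength` metric. The field names follow the Azure Monitor autoscale settings schema as we understand it, so verify them against the current reference. The profile name, thresholds, time windows, and cooldown are illustrative assumptions, and the plan resource ID is left as a placeholder.

```python
import json


def http_queue_rule(plan_id: str, *, operator: str, threshold: int, direction: str) -> dict:
    """One autoscale rule driven by the App Service plan's HttpQueueLength metric."""
    return {
        "metricTrigger": {
            "metricName": "HttpQueueLength",
            "metricResourceUri": plan_id,
            "timeGrain": "PT1M",
            "statistic": "Average",
            "timeWindow": "PT10M",
            "timeAggregation": "Average",
            "operator": operator,
            "threshold": threshold,
        },
        "scaleAction": {
            "direction": direction,
            "type": "ChangeCount",
            "value": "1",
            "cooldown": "PT5M",
        },
    }


def web_autoscale_profile(plan_id: str, max_instances: int) -> dict:
    """A profile that adds an instance under load and removes one when idle."""
    return {
        "name": "web-http-queue",  # hypothetical profile name
        "capacity": {"minimum": "1", "maximum": str(max_instances), "default": "1"},
        "rules": [
            http_queue_rule(plan_id, operator="GreaterThan", threshold=100, direction="Increase"),
            http_queue_rule(plan_id, operator="LessThan", threshold=10, direction="Decrease"),
        ],
    }


if __name__ == "__main__":
    plan_id = "<App Service plan resource ID>"  # placeholder, fill in your own
    print(json.dumps(web_autoscale_profile(plan_id, max_instances=4), indent=2))
```

The serialized profile would go into the `profiles` array of an autoscale setting. Thresholds of 100 and 10 queued requests are only starting points to tune against your own download traffic.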

Make sure to choose an App Service SKU that supports autoscaling. If you didn't, you can change your plan later.
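A quick way to catch an unsuitable plan before deployment is to check the SKU's tier. In App Service, autoscale is available on the Standard, Premium, and Isolated tiers but not on Free, Shared, or Basic. The sketch below infers the tier from the first letter of the SKU name, which is a simplification. SKUs it does not recognize (for example, Functions plans) raise an error rather than a guess.

```python
# Tiers that offer autoscale, keyed by the first letter of the SKU name.
AUTOSCALE_TIERS = {"S": "Standard", "P": "Premium", "I": "Isolated"}
# Free and Shared cannot scale out; Basic supports manual scale-out only.
NO_AUTOSCALE_TIERS = {"F": "Free", "D": "Shared", "B": "Basic"}


def supports_autoscale(sku_name: str) -> bool:
    """Simplified check based on the SKU name's first letter (e.g. 'S1', 'P1v3')."""
    prefix = sku_name.strip().upper()[:1]
    if prefix in AUTOSCALE_TIERS:
        return True
    if prefix in NO_AUTOSCALE_TIERS:
        return False
    raise ValueError(f"unrecognized App Service SKU: {sku_name!r}")
```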
